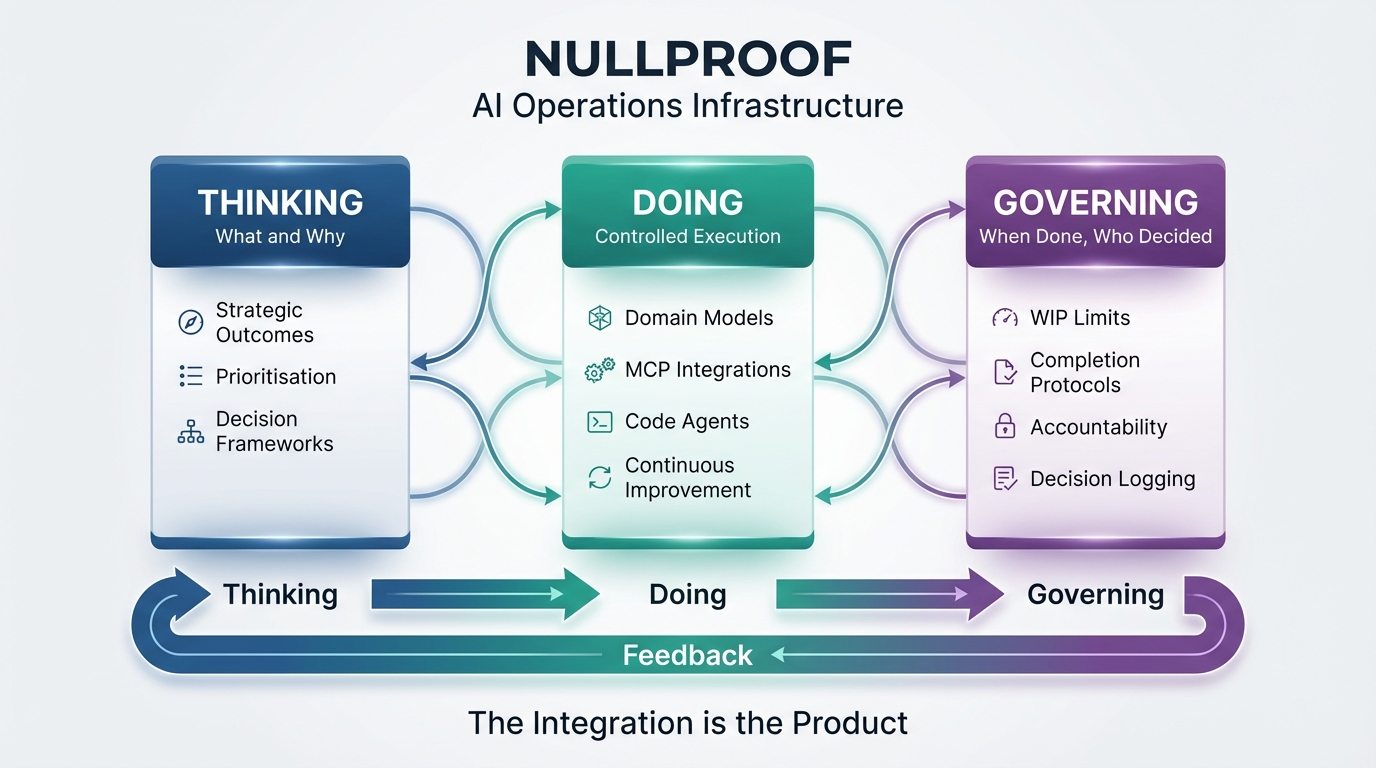

The Creative Operations Stack

Three connected components for managing AI-augmented creative work. In a 14-week single-studio pilot, decision latency fell by roughly 68% (25 minutes to 8 in my own tracking) and no projects went unreviewed.

Fourteen weeks ago, I started measuring where my time actually went. Not the broad categories — “admin,” “creative work,” “planning” — but the specific transitions. How long between deciding what to work on and actually working. How many things sat half-finished at any given moment. Which projects aged indefinitely while urgent-but-trivial tasks kept jumping the queue.

The pattern that emerged wasn’t what I expected. AI had genuinely accelerated everything that could be automated: first drafts in minutes, research in an afternoon, visual concepts faster than I could evaluate them. But the parts requiring human judgment hadn’t sped up at all. If anything, they’d gotten harder, because there was simply more to evaluate, more to prioritise, and more to sign off as actually done.

The production bottleneck had dissolved. In its place: a governance bottleneck.

The Results

Before building a framework, I measured the baseline. After fourteen weeks running the system, I measured again. These are self-reported metrics from my own tracking; your experience will differ.

Decision latency: 25 minutes → 8 minutes in my tracking. That’s the time from “what should I work on?” to actually working. Before: reviewing calendars, reconstructing context, weighing priorities. After: check the prioritised list, read the “next action” note, begin.
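As a quick check on the headline figure, here is a minimal sketch of the arithmetic behind "roughly 68%". The function name `percent_reduction` is an illustrative assumption, not part of the system described here; only the 25-minute and 8-minute figures come from the pilot.

```python
def percent_reduction(before: float, after: float) -> float:
    """Return the relative drop from `before` to `after`, as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100


# Figures from the pilot: 25 minutes before, 8 minutes after.
reduction = percent_reduction(25, 8)
print(f"{reduction:.0f}% reduction")  # prints "68% reduction"
```

The same function works for any before/after pair you track, so a studio could run it against its own baseline.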

Work-in-progress: stable throughout the pilot. Tracked items held at 5-6 high-frequency, 10-12 low-frequency, and 15-18 dormant. No gradual inflation. No emergency weeks where everything became urgent simultaneously.
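One way to keep those counts from inflating is a simple cap per tier. The sketch below is an assumption about how such a check might look, not the actual system: the tier names and upper bounds come from the ranges above, while `WIP_LIMITS`, `over_limit`, and the sample board are hypothetical.

```python
from collections import Counter

# Upper bounds taken from the ranges reported above; treating them as
# hard caps is an assumption of this sketch.
WIP_LIMITS = {"high-frequency": 6, "low-frequency": 12, "dormant": 18}


def over_limit(items: list[tuple[str, str]]) -> dict[str, int]:
    """Return how many items each tier holds beyond its cap.

    `items` is a list of (name, tier) pairs; tiers within their cap are omitted.
    """
    counts = Counter(tier for _, tier in items)
    return {
        tier: counts[tier] - cap
        for tier, cap in WIP_LIMITS.items()
        if counts[tier] > cap
    }


# Hypothetical board: seven high-frequency items, one over the cap of six.
board = [(f"task-{i}", "high-frequency") for i in range(7)]
board.append(("archive shoot", "dormant"))
print(over_limit(board))  # {'high-frequency': 1}
```

An empty result means every tier is within its cap; anything else is a prompt to finish or demote work before starting something new.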

Unreviewed projects: none during the pilot period. Every aged item surfaced for review as designed. Eight ideas resurfaced from the backlog — work that would likely have been forgotten in a traditional system.
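The idea of aged items surfacing for review can be sketched as a date comparison. Everything specific here is assumed for illustration: the 30-day window, the `due_for_review` helper, and the sample backlog are hypothetical, since the real threshold is not stated.

```python
from datetime import date, timedelta

# Hypothetical review window; the real threshold is not stated in the article.
REVIEW_AFTER = timedelta(days=30)


def due_for_review(last_touched: dict[str, date], today: date) -> list[str]:
    """Return item names untouched for at least REVIEW_AFTER, oldest first."""
    aged = [
        (touched, name)
        for name, touched in last_touched.items()
        if today - touched >= REVIEW_AFTER
    ]
    return [name for _, name in sorted(aged)]


# Hypothetical backlog with the date each item was last worked on.
backlog = {
    "brand refresh": date(2024, 1, 5),
    "portfolio edit": date(2024, 3, 1),
    "print series idea": date(2023, 11, 20),
}
print(due_for_review(backlog, today=date(2024, 3, 10)))
# ['print series idea', 'brand refresh']
```

Sorting oldest first means the items most at risk of being forgotten are the first ones seen at review time.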

Throughput: 4-6 completions per week. Sustainable rhythm, not heroic bursts followed by recovery.

These numbers came from a system I built for myself. The rest of this article explains how it works — for readers who want the detail behind the results.

The Problem It Solves

When AI compresses delivery from days to minutes, every surrounding system feels the strain. Approval workflows designed for weekly cycles can’t keep pace. Planning built around month-long projects doesn’t make sense when the work takes an afternoon. Quality checks built for scarcity become overwhelmed by abundance.

The result: organisations that can generate more than they can decide, produce more than they can evaluate, start more than they can finish.

You can’t solve this with more AI. It’s a structural problem — how work flows through decisions, not how fast it gets produced.

The Framework

Three connected components. Each addresses a specific failure mode; together they form a feedback loop.

Thinking connects daily tasks to strategic purpose. Doing handles scheduling and execution. Governing focuses on completion. The key insight: these three need to be connected. Thinking without Doing is strategy that never executes. Doing without Governing is activity that never finishes. Governing without Thinking is process for its own sake.

Beyond Creative Studios

The framework started in a creative context — photography, content, brand development. But the underlying problem may appear wherever AI has accelerated production while human judgment remains the constraint.

Consider contexts like insurance operations, where AI might draft decisions quickly but approvals still take time. Or financial services, where quotes can be instant but exceptions queue up. Or marketing teams, where AI generates many variants but deciding which one ships remains difficult.

I don’t know yet how well this transfers. That’s partly why I’m writing about it.

What I’m Looking For

I’m looking for a creative studio to pilot the full system.

You:

- 1-10 person team

- Multiple parallel workstreams

- Already using AI tools

- More output than clarity, more starts than finishes

- Willing to share before/after metrics

What you get:

- Implementation support

- Custom process definitions for your workflows

- 6-week pilot with weekly check-ins

- Measurement of decision latency, WIP, throughput

What I ask:

- Permission to use anonymised metrics as case study (subject to your written approval before any publication)

- Candid feedback on what works and what doesn’t

- Introduction to 2-3 peers if successful

The pilot is offered at no cost to qualified studios, in exchange for feedback and case study participation. I’ll only publish aggregated or anonymised metrics with your explicit agreement. Please don’t share any third-party confidential information you’re not authorised to disclose.

This is research, not a product pitch. I have something that works for me. I want to know if it works for others.

Interested? Get in touch via the product page or connect on LinkedIn.